My father, born in 1902, lived his life during the vacuum tube era of electronics. Vacuum tubes had many progenitors, such as Nikola Tesla, Thomas Edison, and other pioneers who were instrumental in getting this technology off the ground. For example, Britain’s Sir John Ambrose Fleming invented the first diode tube in 1904, while American Lee de Forest invented the Audion in 1906, the three-element tube that became the first practical amplifier.

The idea is quite simple: a tiny electric current from your microphone can control a much larger current going to your loudspeaker. That’s called an amplifier. Unfortunately, vacuum tubes required a red-hot heating element to function, and that was their Achilles’ heel: the tubes tended to burn out and need replacement. Every drugstore had a “tube tester” and a supply of vacuum tubes for those who wanted to do their own repairs. Regrettably, this flaw (burnout) made vacuum-tube computers, such as ENIAC with its roughly 18,000 tubes, notoriously unreliable.

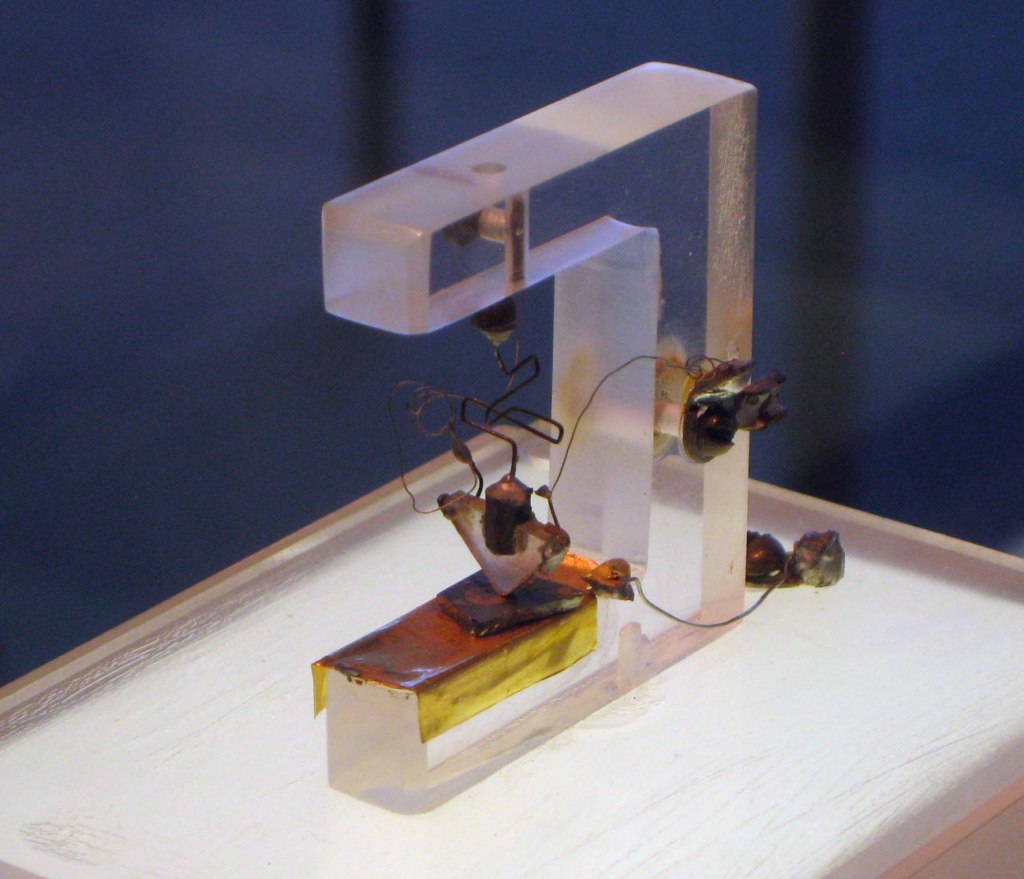

On December 23, 1947, three Bell Telephone Labs scientists (William Shockley, John Bardeen, and Walter Brattain) demonstrated the first solid-state transistor. It was an amplifying device that didn’t require the heating element or the high voltages needed by vacuum tubes. They shared the 1956 Nobel Prize in Physics for this discovery.

According to a book authored by Bardeen and Brattain, the size (active area) of the world’s first solid-state germanium transistor was about 0.025 cm, or 250,000 nanometers (nm). For comparison, a hair on top of your head is about 100,000 nm thick.

It took several years for manufacturers like Raytheon to mass-produce a working transistor. Just like the Bell Labs prototype, Raytheon built its transistor on a tiny slab of germanium. The transistor they designed for hearing aids was the CK718. Fortunately, Raytheon sold the CK718 rejects to the hobby electronics market as the CK722. They still worked but didn’t quite pass the CK718 quality tests. The price was 99 cents in 1956.

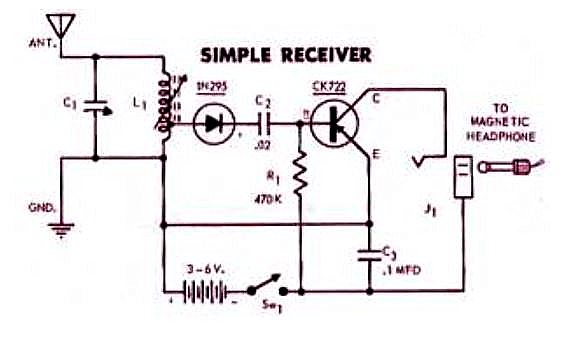

In 1956, I was 11 years old, living in Fort Mitchell, Kentucky, and fascinated with electronics. I had a paper route and used the money to buy tools (soldering gun, screwdrivers, long-nose pliers, headphones, and solder). I saw this construction project in a magazine, maybe Popular Mechanics.

So I took the bus across the Ohio River into Cincinnati, where my father worked as a Division Superintendent of the Chesapeake and Ohio Railway (C&O). From there, I rode a city bus to a radio supply store I had found in the phone book. The sign over the store said: “Wholesale Only.” I hesitated at the front door, not sure they would even talk to me.

On the contrary, they were fascinated by the very young kid who wanted to build a one-transistor radio. I mispronounced everything. “No, son, that’s capacitor, not capaci-tater.” “No, son, that’s diode, not dio-dee.” The store had everything I needed: the vari-loopstick adjustable inductor, the adjustable capacitor, battery holder, resistors, capacitors, diode, transistor, Fahnestock clips, and so on. So I went home and built the thing. It probably looked something like this.

And it worked! By adjusting the inductor and the capacitor, I was able to tune in to Cincinnati’s most powerful radio station. I still remember how exhilarated I was when I first heard the music on my headphones. This moment was the beginning of my lifelong love affair with transistors, computers, and electronics.

So, just how big was that 1956 CK722 transistor? I couldn’t find the figure on the Web, but I suspect it was at least 250,000 nanometers (nm), just like the Bell Labs prototype. That’s pretty big by today’s standards.

In the years that followed, semiconductor companies were created to make even more complex transistor-based devices. They learned how to put multiple transistors, resistors, and capacitors on a silicon slab (substrate) and wire them up. These were called integrated circuits, and the race was on to make them more and more complex. In addition to applications in radios and televisions, transistors were perfect for the logic elements required to build computers (logic gates, flip-flops, adders, shifters, and so on).

When I started my first job in 1968 at the Boeing Space Center in Kent, Washington, they were building a spacecraft attitude control computer with these new “integrated circuits.” Boeing hired me to work on this experimental computer and thus gave me my start in programming and digital logic design.

Flash forward to 1985. I worked for my third company, Mennen Medical, in Clarence, New York (a suburb of Buffalo). Herb Mennen was a business partner of Wilson Greatbatch, the inventor of the implantable pacemaker. Mennen once took me to lunch with Wilson Greatbatch; he was a gentle, soft-spoken genius. Today, a Medtronic pacemaker is keeping me alive. It’s a small world!

My job was Head of Software Development for the Mennen Horizon 2000 Patient Monitor, the first digital patient monitor that used a color display.

This Horizon 2000 monitor can be seen in the 1988 Arnold Schwarzenegger film “Red Heat.” It replaced a Mennen Medical analog heart monitor that appears at the very end of the 1985 “Re-Animator” horror movie.

My boss, Yossi Elaz from Israel, designed two computer boards for the product. One collected the signals from the ECG pads, blood pressure sensors, oxygen saturation (SaO2) sensors, and so forth. The other board created the color displays and the menus (as dictated by the marketing department).

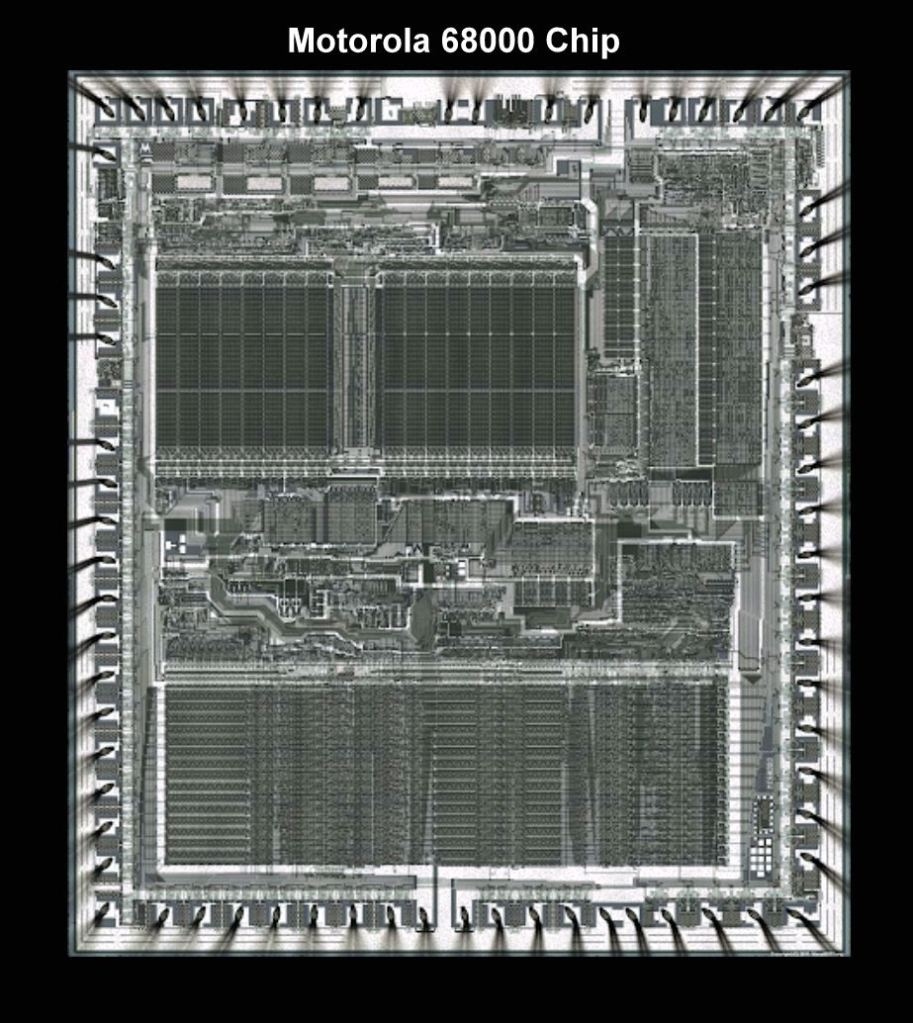

For these computer boards, we decided to use the Motorola 68000 microprocessor chip. This chip was the best available, capable of 32-bit arithmetic and of addressing 16 megabytes of memory. OK, in the interest of full disclosure, we had to add eight memory chips and a couple of input/output port chips to make a working microcomputer. Still, these boards were pushing the state of the art.

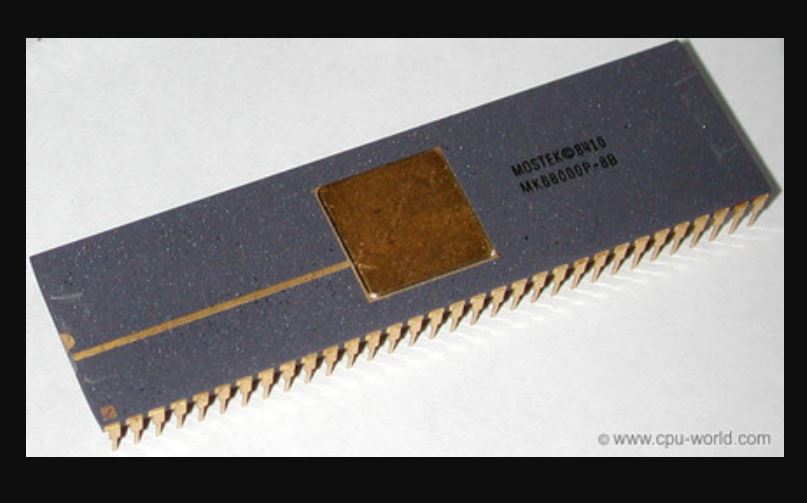

By Pauli Rautakorpi – Own work, CC BY 3.0, https://commons.wikimedia.org/w/index.php?curid=31671531

1985 Motorola 68000 package

So why was it called the 68000? The chip had 68,000 transistors! Each transistor (MOSFETs in this case) was 3,500 nanometers (nm) in size. Do you see where this is going?

Many companies designed and fabricated computers on a chip in the eighties and nineties, but they also had to provide software tools to program these devices, and those tools were very expensive. Eventually, a British company called ARM took a different path: it designed efficient microprocessor cores and licensed them to everybody, while a broad ecosystem of software tools, many of them free and open source, grew up around them. As a result, more than 180 billion ARM-based computer chips have shipped worldwide.

Flash forward again to today (2021). I retired from engineering six years ago and now live in Florida. If I were to buy an iPhone 12 Pro Max, the 256-gigabyte model would cost about $1,199. For example, the Wikipedia database is about 20 gigabytes and could easily fit on this phone.

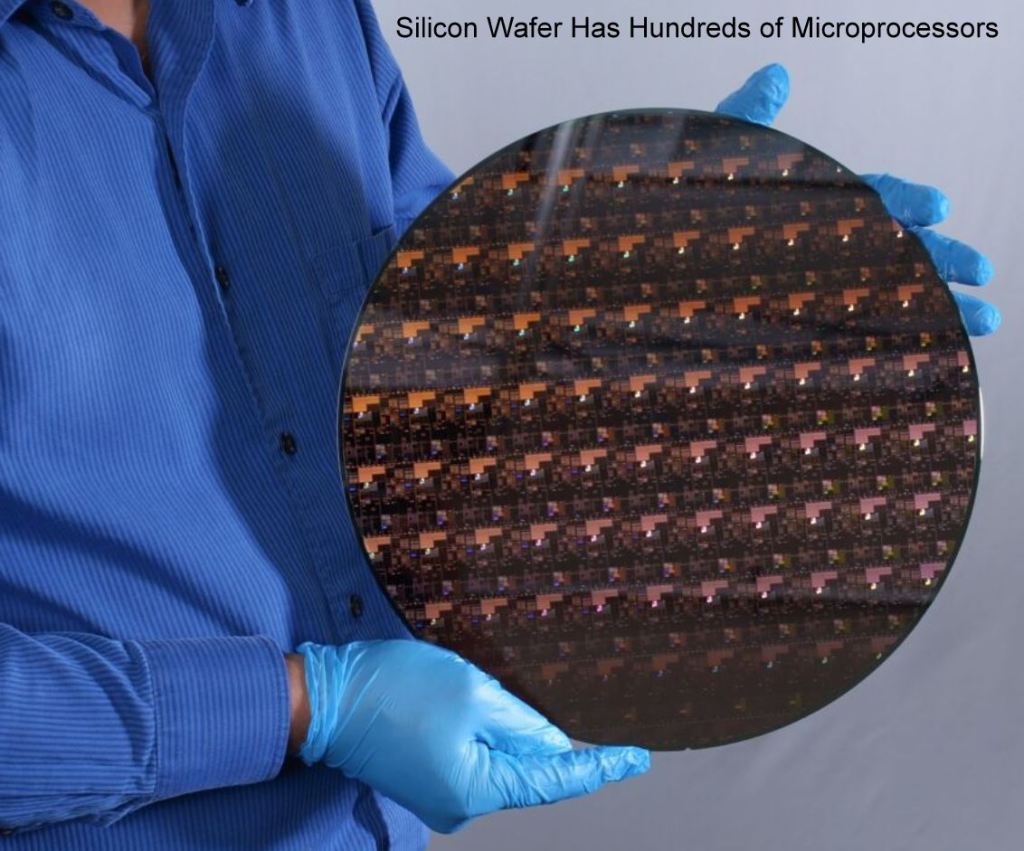

The iPhone 12 Pro Max uses the Apple-designed A14 Bionic chip. It’s an ARM 64-bit system-on-a-chip with a six-core processor (meaning it can execute six streams of instructions simultaneously). In addition, there are two other processors: a graphics engine and a neural network engine. The transistors are 5 nanometers (nm) in size, and there are 11.8 billion of them. The Taiwan Semiconductor Manufacturing Company (TSMC) builds these chips for Apple.

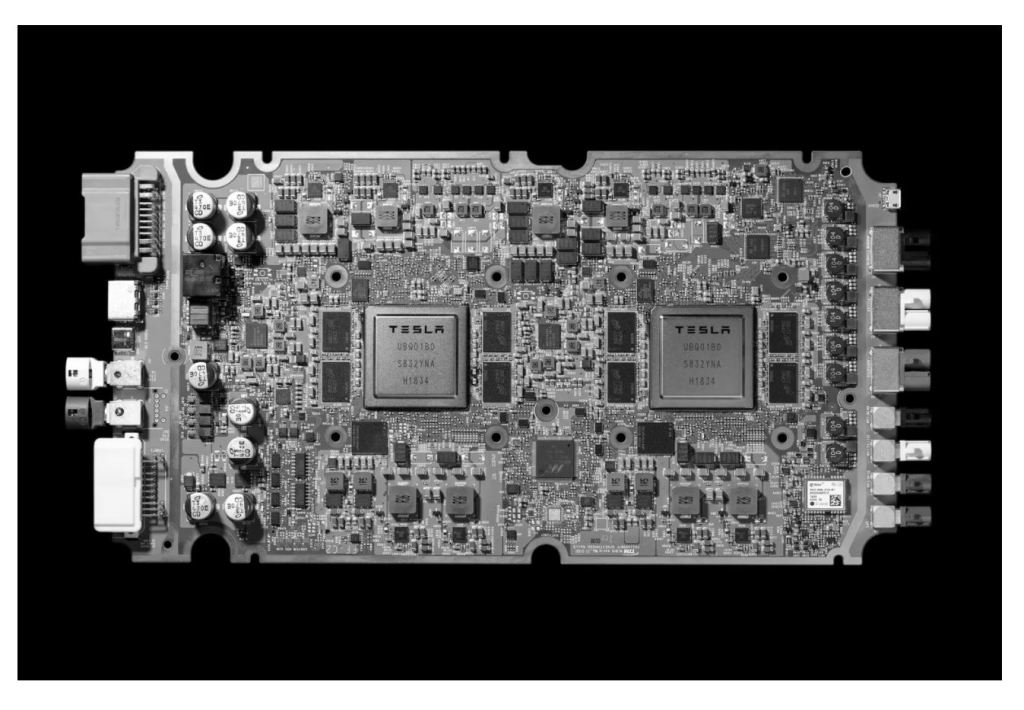

Two years ago, Elon Musk’s company, Tesla, built a similar supercomputer-on-a-chip for the Full Self-Driving (FSD) system in Tesla vehicles. It’s also an ARM 64-bit system-on-a-chip with multiple processor cores and neural network engines. Samsung builds the Tesla FSD chip with 14-nanometer (nm) transistors, so it holds only about 6 billion transistors. There are two of these chips in each Tesla vehicle, running the Full Self-Driving software.

IBM creates some of the world’s most sophisticated computer systems. It also designs remarkable semiconductors and integrated circuits. Oddly, it isn’t interested in mass-producing these advanced semiconductors. Instead, it first develops the breakthrough transistors, integrated circuits, and the manufacturing processes needed to fabricate them. Once IBM proves that a new design works, it licenses the technology to partners such as Samsung and Intel. These partners adopt the IBM processes in their factories and supply IBM and other customers with the new generation of chips.

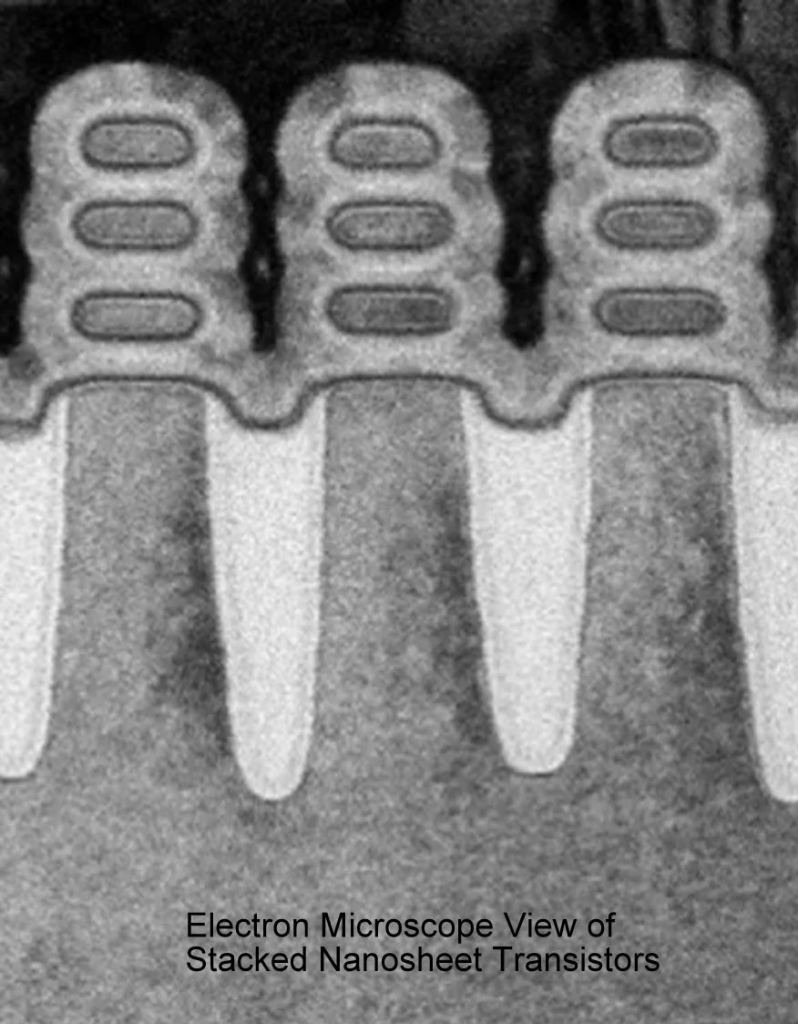

So last May, IBM shocked the semiconductor industry by announcing that it had developed a “nanosheet” transistor only 2 nm in size. It also operates at less than 1.0 volt (the Motorola 68000 chip in 1985 ran on 5 volts). IBM’s test chip, about the size of a fingernail, holds 50 billion transistors.

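The big breakthrough was the transistor’s shape. Instead of the vertical fins used in today’s FinFET transistors, IBM stacked thin horizontal sheets of silicon, each wrapped on all sides by the gate (a “gate-all-around” design), and used exotic materials such as hafnium-based insulators.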
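To put the article’s own numbers side by side, here is a minimal Python sketch of the shrink arithmetic. It is only an illustration under a loudly stated assumption: it treats every “nm” figure as a literal, one-dimensional transistor size, even though the Bell Labs figure describes the prototype’s active area and modern values such as 5 nm and 2 nm are process-generation labels rather than measured dimensions. The table and function names are hypothetical, chosen just for this sketch.

```python
"""Back-of-the-envelope transistor scaling, using figures quoted in this article.

Assumption: each "nm" value is treated as a literal linear size. Modern node
names (5 nm, 2 nm) are really process labels, so the ratios are only rough.
"""

# Hypothetical lookup tables built only from numbers in the article.
SIZES_NM = {
    "Bell Labs prototype": 250_000,
    "Motorola 68000": 3_500,
    "Tesla FSD chip": 14,
    "Apple A14 Bionic": 5,
    "IBM nanosheet": 2,
}

TRANSISTOR_COUNTS = {
    "Motorola 68000": 68_000,
    "Tesla FSD chip": 6_000_000_000,
    "Apple A14 Bionic": 11_800_000_000,
    "IBM nanosheet test chip": 50_000_000_000,
}


def shrink_factor(old_nm: float, new_nm: float) -> float:
    """Return how many times smaller new_nm is than old_nm (one dimension only)."""
    return old_nm / new_nm


def main() -> None:
    baseline = SIZES_NM["Bell Labs prototype"]
    # Skip the first entry: it is the 1947 baseline itself.
    for name, size in list(SIZES_NM.items())[1:]:
        factor = shrink_factor(baseline, size)
        print(f"{name:20s} {size:>9,} nm  about {factor:>9,.0f}x smaller than 1947")

    # Compare transistor counts per chip, 68000 versus IBM's 2 nm test chip.
    growth = TRANSISTOR_COUNTS["IBM nanosheet test chip"] / TRANSISTOR_COUNTS["Motorola 68000"]
    print(f"50 billion vs. 68,000 transistors: about {growth:,.0f} times as many")


if __name__ == "__main__":
    main()
```

Because area scales with the square of a linear dimension, the gain in packing density is far larger than these one-dimensional ratios suggest.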

Is a 2 nm transistor a big deal?

Consider what happens when you look for a picture on Facebook or make a query to Google. They’ll tell you that it goes to the “Cloud” for processing, but in reality the request is sent to a Data Center. The Data Center is just a warehouse full of PC-class computers holding the data. Yes, they call them “Servers,” but these are essentially personal computer (PC) motherboards with hard drives, optimized for Internet connectivity and rapid lookup of stored data (photos, movies, facts). It’s estimated that there are 1.4 billion servers in these Data Centers worldwide.

These “Cloud” data centers generate so much heat that many are built near oceans or lakes, where cooling water is plentiful.

The amount of data stored today in all these “Cloud” data centers is 44 zettabytes. One zettabyte can hold 1,320 billion 4K movies. These servers often include a ten-terabyte hard disk drive, which adds to the heat generated. If solid-state (transistorized) memory chips can replace that mechanical device, the power consumed in the data center can be significantly reduced.

Researchers have suggested that a 1 nm transistor might be possible using bismuth for the electrical contacts. However, it will probably take ten years to perfect this technology.

Will these 2 nm transistors affect your life in a positive way over the next decade?

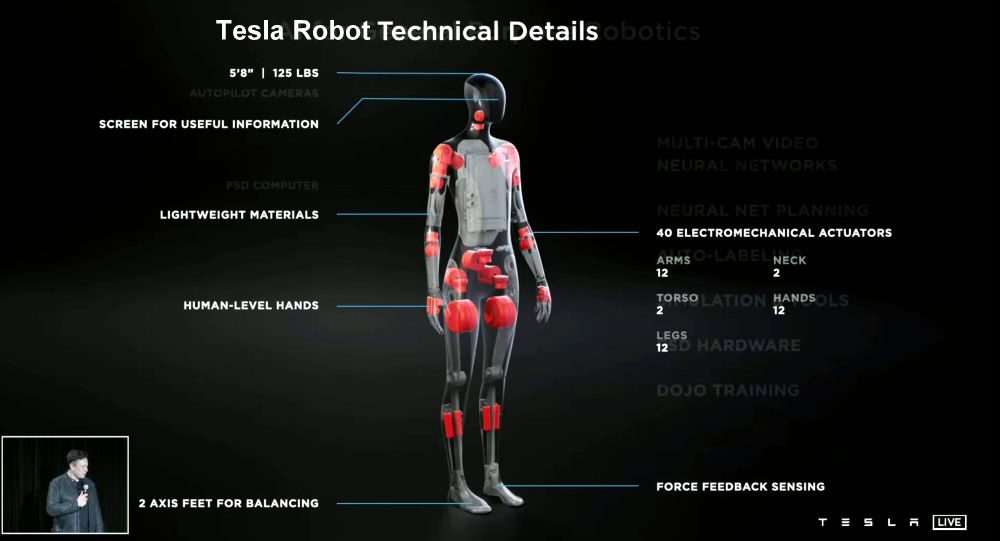

Your laptops and smartphones will run four days before recharging. Your smartphone will be able to do perfect language translation when you’re traveling abroad or viewing a foreign movie. Your digital watch will alert your physician if your vital signs deviate from normal. Your Internet response will be nearly instant; your 4K movies will never pause to catch up. Arrays of these supercomputers on a chip will be the basis of powerful Artificial Intelligence (AI) engines that will help find mRNA-style vaccines for cancer, AIDS, and other disorders. Automobiles will do the driving for you using onboard Autopilot software. We’ll start to see humanoid robots doing menial tasks around the house, such as cleaning up, taking out the trash, clearing the table, and helping the elderly in and out of bed.

Do you think that last one is pure science fiction? Yesterday, Elon Musk announced that Tesla would be building a humanoid robot. It will be powered by the same computer system used in Tesla electric cars, enhanced by software from his AI team. Remember that Wall Street is littered with the bodies of hedge fund managers who bet against Musk’s genius.

In 1956, I bought the first transistor available to the public. Soon there will be integrated circuits with 50 billion transistors on a sheet of silicon the size of my thumbnail.

The most important invention of the 18th century had to be the steam engine. It revolutionized agriculture and manufacturing. However, for the last 75 years, the most critical development has to be the transistor. It enabled the Internet, flat-screen televisions, smartphones, and many other life-changing devices that we take for granted.

To borrow a phrase from the seventies: “Transistors, You’ve come a long way, Baby!”